The Amodei siblings leading Anthropic clash with the White House over AI safety

Dario and Daniela Amodei, who are Jewish, are heading the AI giant as it sues the Department of Defense over its ‘supply-chain risk’ label

David Paul Morris/Bloomberg via Getty Images

Dario Amodei, co-founder and chief executive officer of Anthropic, left, and Daniela Amodei, co-founder and president of Anthropic, during the Bloomberg Technology Summit in San Francisco, California, US, on Thursday, May 9, 2024.

Siblings Dario and Daniela Amodei are like countless other Americans who have built a family business together. Except that the business they have built is artificial intelligence giant Anthropic, one of the fastest-growing companies in America — and in the five years since they left cushy jobs at rival OpenAI to start it, they have each amassed billions in wealth.

From the beginning, the Amodeis have said the principle driving their approach to Anthropic is safety. They think other AI companies are not paying enough attention to safety risks as the technology’s capabilities grow each day. Until now, that ethos has been a point of curiosity for the consumers playing around on the company’s Claude chatbot, and a selling point for the businesses spending large sums of money to employ Claude in enterprise settings.

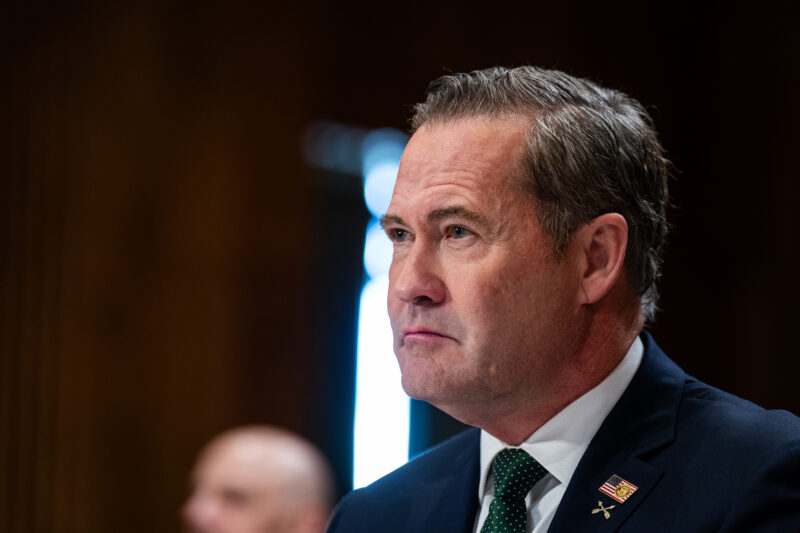

But a dispute over Anthropic’s stated commitment to safety has now put the company squarely in conflict with the Trump administration. On Monday, Anthropic sued Secretary of Defense Pete Hegseth, Secretary of State Marco Rubio and several other Trump administration officials over Hegseth’s decision to designate Anthropic a national security “supply-chain risk” last month, after the company told the Pentagon that it would not allow its technology to be used for mass domestic surveillance or in fully autonomous weapons.

“I believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries,” Dario Amodei said in February. “However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do.”

Amodei now finds himself facing off against President Donald Trump, an uncomfortable position for the CEO of a company that currently holds hundreds of millions of dollars in contracts with the federal government and that has said it wants to continue those working relationships. The complaints allege that the federal government’s actions targeting Anthropic go beyond what is legally allowed under the supply-chain statutes, and that the Trump administration is ideologically motivated in targeting Anthropic. (A Department of Defense spokesperson has said the organization does not comment on ongoing legal matters.)

Defense Secretary Pete Hegseth is “within his right to cancel the contract. But I think that the people in the Department of War, they’re trying to turn the screw. They’re trying to make it tough for Anthropic to survive,” Will Rinehart, a senior fellow at the American Enterprise Institute who researches tech policy, told Jewish Insider. “It’s one thing to say, ‘Hey, we no longer want to work with Anthropic.’ But it’s quite different to basically use this supply-chain risk designation and to then go after Anthropic, because that [designation] was developed to be basically weaponized against China.”

Dario and Daniela Amodei grew up in San Francisco with parents who wanted them to be engaged with the world. Their mother, Elena Engel, is Jewish, and she worked to build libraries in the Bay Area. Their father, Riccardo Amodei, was a leathersmith from Italy.

“They gave me a sense of right and wrong and what was important in the world,” Dario Amodei told journalist Alex Kantrowitz in 2025. They “imbu[ed] a strong sense of responsibility.”

Amodei initially went into the hard sciences. He went to college at Caltech, where he authored a memorable op-ed in the campus newspaper calling on his fellow students — including in the sciences — to be more engaged with world politics, and in particular to speak out against the Iraq war.

“We are distracted not by the sex scandals and sensationalism of the rest of the world, but by problem sets, computer games and bizarre arguments about the availability of donuts. We, who have so much power to influence the future, have bafflingly renounced our right to it,” Amodei wrote in 2003. “We have the privilege and duty to preserve the ethical integrity of our community, our nation and humanity.”

It’s an ethos he brought with him when he transferred to Stanford, and then to Princeton, where he earned a PhD in physics. Early in his career, he began working on AI technology. He worked as a deep learning researcher at Google, before spending nearly five years at OpenAI, rising to become its vice president of research.

Daniela Amodei, meanwhile, did not come from the STEM world. She worked in politics for a period, first on the campaign and then in the congressional office of former Rep. Matt Cartwright (D-PA). Then she worked for the payments company Stripe before joining OpenAI two years after her brother.

Over time, after raising concerns about the likely impacts of AI, Dario Amodei decided that the leaders of OpenAI were not taking his critiques as seriously as he hoped. So he and Daniela left the company to start Anthropic, where he is CEO and she is president.

Since then, Anthropic has been wildly successful. It launched Claude a year after OpenAI launched ChatGPT, which was the first time most people outside of the tech world began to use and understand generative AI. And while Claude has a fraction of ChatGPT’s active users, the company primarily focuses on selling its technology to businesses.

Even as Claude has grown, the Amodei siblings still talk about the potential dangers of AI. Dario Amodei famously said in 2025 that the technology could erase half of all entry-level white-collar jobs in the next five years.

“I’m incredibly optimistic about the technology,” he said in December. “But nothing that powerful doesn’t have a significant number of downsides.”

Anthropic has framed itself as an advocate for greater safeguards on the technology. Amodei came to Washington in September to speak about the topic as the company doubled down on its lobbying efforts. The company plans to donate $20 million this year to congressional candidates who want to regulate the AI industry, via a political group that was created in direct opposition to fundraising efforts being supported by OpenAI.

“We’ve seen lots of bad things: We’ve seen teenagers being driven to commit suicide by LLMs,” Amodei said. “We can imagine much larger-scale catastrophe. So the thing we’ve always advocated for is basic transparency requirements around models.”

For a company that is so upfront about its values, a test of those values was inevitable. But no one expected the foe to be the Pentagon.
