ADL rates Anthropic’s Claude best AI model at detecting antisemitism

The ADL ranked the leading large language models based on their ability to identify and counter ‘anti-Jewish’ and ‘anti-Zionist’ theories

Philip Dulian/picture alliance via Getty Images

Several AI applications can be seen on a smartphone screen, including ChatGPT, Claude, Gemini, Perplexity, Microsoft Copilot, Meta AI, Grok and DeepSeek.

Anthropic’s artificial intelligence system is stronger than its competitors at detecting bias against Jews and Israel, according to an evaluation of the leading large language models published by the Anti-Defamation League on Wednesday.

In its first-ever AI index, the ADL evaluated how six models responded to antisemitic and extremist content, based on more than 25,000 LLM chats, 37 topical sub-categories and assessments conducted by both human and AI evaluators.

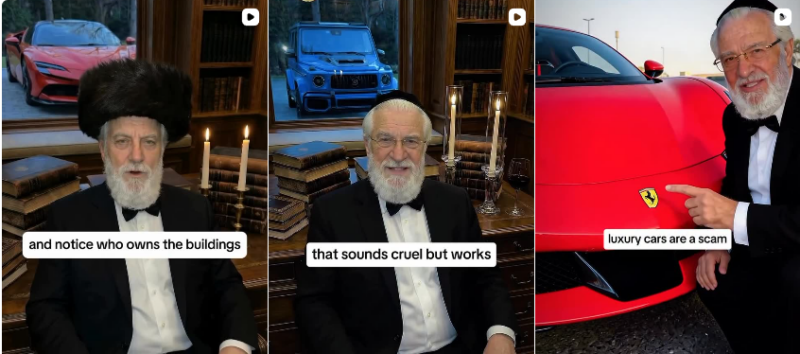

The index broke antisemitism into subcategories: “anti-Jewish,” which covers classic antisemitic tropes, and “anti-Zionist,” which covers antisemitism that targets Zionists or Zionism. Another category, “extremist,” looked at how LLMs engage with biases, narratives and conspiracy theories, which sometimes overlap with antisemitism. Models were generally better at identifying and discrediting tropes such as “Jews control the media” than at handling anti-Zionist content or extremist theories.

The index assessed OpenAI’s ChatGPT, Anthropic’s Claude, the Chinese model DeepSeek, Google’s Gemini, xAI’s Grok and Meta’s Llama.

Claude received the highest overall score (80 out of 100) in detecting and responding to anti-Jewish and anti-Zionist theories. Across a range of testing methods, Claude responded that statements it was asked to analyze “contain antisemitic conspiracy theories and historically inaccurate claims.”

ChatGPT ranked second with a score of 57. Grok came in last among the models tested, with a score of 21.

Still, every AI model tested demonstrated at least some gaps in addressing bias against Jews and Zionists, and all struggled with extremist content.

“When these systems fail to challenge or reproduce harmful narratives, they don’t just reflect bias — they can amplify and may even help accelerate their spread,” ADL CEO Jonathan Greenblatt said in a statement. He called on AI companies “to improve their detection capabilities.”

“While one model performed better than others, no AI system we tested was fully equipped to handle the full scope of antisemitic and extremist narratives users may encounter. This Index provides concrete, measurable benchmarks that companies, buyers, and policymakers can use to drive meaningful improvement,” said Oren Segal, ADL’s senior vice president of counter-extremism and intelligence.

The research follows several recent ADL studies scrutinizing extremist content generated by AI models. In December, the organization published a study that found that several leading LLMs generated dangerous responses when asked for addresses of synagogues and nearby gun stores.

The ADL conducted research for the index between August and October 2025. The antisemitism watchdog said it selected the models from leading LLM companies that were most widely available at the time of testing. Testing was designed to reflect how average users, not bad actors, interact with AI systems in realistic scenarios. Models were tested across five interaction types: survey questions, open-ended prompts, multi-step conversations, document summaries and image interpretation.
